Digital privacy has long been a game of slow, incremental gains, but for Instagram users, the road has hit a sudden, definitive dead end. In 2019, Mark Zuckerberg famously pivoted the company toward a “privacy-focused vision,” promising a future where private messaging would be as secure as a whispered conversation. On May 8, 2026, that vision officially expires.

Meta has confirmed it will discontinue support for end-to-end encryption (E2EE) in Instagram direct messages, marking a massive structural shift in the global conflict between tech giants, regulatory mandates, and civil liberties. This is not a routine software update; it is a fundamental demolition of the private digital rooms Meta spent half a decade building.

As the cryptographic locks are removed, your “private” conversations are transitioning from secure silos to recorded broadcasts. Understanding the technical and legal machinery behind this reversal is essential for anyone who values a digital footprint that isn’t permanently archived on a corporate server.

Takeaway 1: The May 8 Deadline is a Hard Stop for Your Data

The transition scheduled for May 8, 2026, represents a “hard stop” for legacy data. Because true E2EE relies on unique cryptographic keys stored exclusively on your device, Meta cannot automatically port these “secure silos” into its new, unencrypted server architecture. Once the deadline passes, the infrastructure supporting these keys will be dismantled, risking the permanent loss of message history.

Users must take manual action to secure their history. To avoid losing it, use the “Download Your Information” feature. If you are running an older version of the Instagram app, you may be locked out of the retrieval tool entirely; update to the latest build before starting the export.

Actionable Steps for Data Recovery:

- Navigate to your Profile and open the Menu (three horizontal lines).

- Select Your Activity and tap Download your information.

- Request a backup of your messages in HTML or JSON format.

- Meta will email a link to a zip file containing your chats and media.
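If you choose JSON, the export can be inspected with a few lines of Python. The following is a minimal sketch, not a description of Meta's documented format: it assumes the zip file has been extracted and that each conversation file contains a top-level `messages` list whose entries carry `sender_name` and `timestamp_ms` fields. Those field names, and the helper names `load_thread` and `summarize`, are assumptions for illustration; check them against your own archive before relying on the result.

```python
import json
from collections import Counter
from datetime import datetime, timezone
from pathlib import Path


def load_thread(path):
    """Return the list of message dicts from one exported conversation file."""
    data = json.loads(Path(path).read_text(encoding="utf-8"))
    # Assumed layout: {"messages": [{...}, ...]}. A missing key yields an empty list.
    return data.get("messages", [])


def summarize(messages):
    """Count messages per sender and report the earliest and latest timestamps."""
    counts = Counter(m.get("sender_name", "unknown") for m in messages)
    # Assumed field: "timestamp_ms" holds milliseconds since the Unix epoch.
    stamps = [m["timestamp_ms"] for m in messages if "timestamp_ms" in m]

    def to_utc(ms):
        return datetime.fromtimestamp(ms / 1000, tz=timezone.utc)

    return {
        "counts": dict(counts),
        "first": to_utc(min(stamps)) if stamps else None,
        "last": to_utc(max(stamps)) if stamps else None,
    }
```

Calling `summarize(load_thread(...))` on one extracted conversation file gives a quick check that the backup actually contains the history you expect, before the deadline makes a second export impossible.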

The irony is sharp: a platform update framed as “streamlining” effectively forces users to dump sensitive data onto local devices or watch it vanish into the digital ether.

“Very few people were opting in to end-to-end encrypted messaging in DMs, so we’re removing this option from Instagram in the coming months.” — Meta Spokesperson, statement to Engadget/Hacker News.

Takeaway 2: The “Low Adoption” Excuse vs. The Regulatory Reality

Meta’s official narrative—that “very few people” used the opt-in feature—is a convenient smokescreen for a much more aggressive regulatory environment. The company is currently retreating from a “nearly impossible technical dilemma”: the mathematical contradiction between absolute encryption and government-mandated “Chat Control.”

Meta has faced significant legal heat, notably from New Mexico Attorney General Raúl Torrez and the Nevada Attorney General, both of whom have attacked E2EE as “irresponsible.” These lawsuits argue that encryption prevents the detection of child sexual abuse material (CSAM) and “drastically impedes” law enforcement.

By stripping E2EE from Instagram, Meta sidesteps these complex legal battles and the looming threat of massive compliance fines under the UK’s Online Safety Act and the EU’s proposed scanning mandates. Sacrificing the privacy of the many has become the cost of doing business in a world of mandatory server-side sweeping.

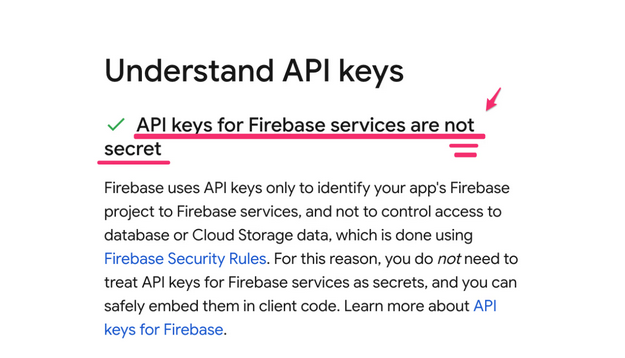

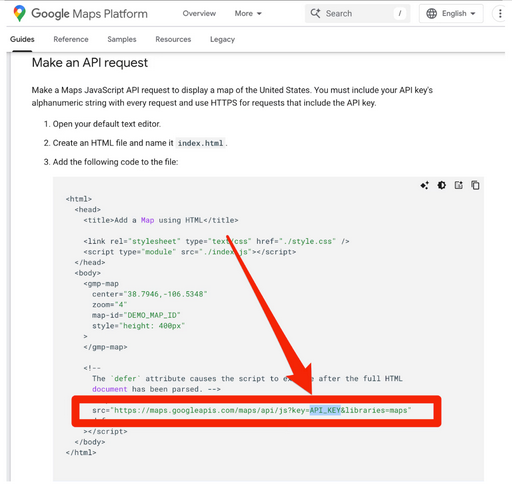

Takeaway 3: The Technical Shift from “Whispers” to “Recorded Broadcasts”

The removal of E2EE changes the technical nature of your messages from private whispers to recorded broadcasts. While Meta will continue to use Transport Layer Security (TLS), the distinction is critical:

| Feature | End-to-End Encryption (E2EE) | Transport Layer Security (TLS) |
|---|---|---|
| Who has the keys? | Only the sender and recipient. | Meta holds the keys at the destination. |
| Visibility | Content is invisible to Meta. | Meta decrypts and reads content on its servers. |
| Metadata | Meta tracks routing/participants. | Meta tracks routing, packet size, and session length. |

Under the new TLS-only model, Meta holds the keys to the “transit pipe.” A hacker on public Wi-Fi still cannot see your text, but the message is decrypted on Meta’s corporate servers, where it can be logged and analyzed, before being re-encrypted for the recipient. Furthermore, even with “secure” pipes, leaked metadata (packet bursts, session duration, and routing origin) allows the platform to build highly accurate behavioral models of your life without reading a single word.
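The difference in who holds the keys can be made concrete with a toy model. The sketch below is illustrative only: `keystream_xor` is a deliberately simple stand-in cipher (not secure, and not what TLS or the Signal protocol actually use), and the `Server` class and `demo` function are hypothetical names invented for this example. It shows only where plaintext becomes visible in each design.

```python
import hashlib
import secrets


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """Toy symmetric cipher: XOR data with a SHA-256-derived keystream.

    Illustrative only and NOT secure (no nonce, no authentication).
    Applying it twice with the same key returns the original data.
    """
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream.extend(hashlib.sha256(key + counter.to_bytes(8, "big")).digest())
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


class Server:
    """Hypothetical relay that records whatever it is able to see."""

    def __init__(self):
        self.log = []

    def relay_transport_only(self, hop_in_key, hop_out_key, wire_bytes):
        # The server terminates the sender's encrypted hop, so it sees plaintext.
        plaintext = keystream_xor(hop_in_key, wire_bytes)
        self.log.append(plaintext)
        # It then re-encrypts the message for the recipient's hop.
        return keystream_xor(hop_out_key, plaintext)

    def relay_end_to_end(self, wire_bytes):
        # The server holds no key: it can only store and forward ciphertext.
        self.log.append(wire_bytes)
        return wire_bytes


def demo(message: bytes):
    server = Server()

    # Transport-only: each hop key is shared between one client and the server.
    sender_hop = secrets.token_bytes(32)
    recipient_hop = secrets.token_bytes(32)
    wire = keystream_xor(sender_hop, message)
    forwarded = server.relay_transport_only(sender_hop, recipient_hop, wire)
    delivered_transport = keystream_xor(recipient_hop, forwarded)

    # End-to-end: the key is known only to sender and recipient.
    shared = secrets.token_bytes(32)
    wire = keystream_xor(shared, message)
    delivered_e2ee = keystream_xor(shared, server.relay_end_to_end(wire))

    return server.log, delivered_transport, delivered_e2ee
```

In both modes the recipient gets the original message. The server's log differs: the transport-only entry is the readable text, while the end-to-end entry is ciphertext. Even that ciphertext has the same length as the message, a small instance of the metadata leakage described above.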

Takeaway 4: Your Private Conversations are the New AI Training Ground

Without the cryptographic barrier of E2EE, Meta gains “frictionless” access to the core text of your conversations. This facilitates a deeper algorithm feedback loop. Historically, Meta had to infer interests from secondary signals (like shared links or metadata); now, it can parse your text directly.

This shift aligns with the 2025 policy update under which interactions with Meta AI tools in private chats are harvested for targeted ads and AI training. The familiar experience of discussing a niche product in a DM and seeing a corresponding Reel minutes later is about to be driven by more precise signals. Moving messages into a server-readable environment allows the platform to move from metadata-based guesses to literal text-based targeting.

Takeaway 5: The Migration to “Verifiable” Alternatives

For users who require confidentiality, the message is clear: migrate. However, the landscape of alternatives is fraught with technical “catches.” The basic requirement for trust in this new era is verifiable open-source code, as closed-source apps cannot be audited for “master keys.”

- Signal: The gold standard. Open-source, non-profit, and collects virtually zero metadata.

- WhatsApp: While it uses the Signal protocol, it is closed-source. You cannot verify if a backdoor exists, and Meta aggressively harvests metadata to map your social graph.

- Telegram: A popular choice, but a major caveat exists—E2EE is not the default. You must manually initiate a “Secret Chat” for any real privacy.

- iMessage: Strong between Apple devices, but fragile. The moment a non-Apple user enters a group chat, the conversation falls back to SMS/MMS or RCS, and iMessage’s end-to-end encryption no longer applies.

Conclusion: The Future of the “Private Room”

The May 8, 2026 deadline marks a definitive policy reversal for Meta and a retreat from the centralized platform’s brief experiment with true user sovereignty. By reclaiming the keys to the kingdom, Meta is re-establishing the model of the centralized corporate server as the ultimate arbiter of private speech.

As we move toward a future of decentralized models and personal smart contracts, we must confront a pressing question: Can a “private” digital space truly exist when the platform hosting it holds the keys to the door?